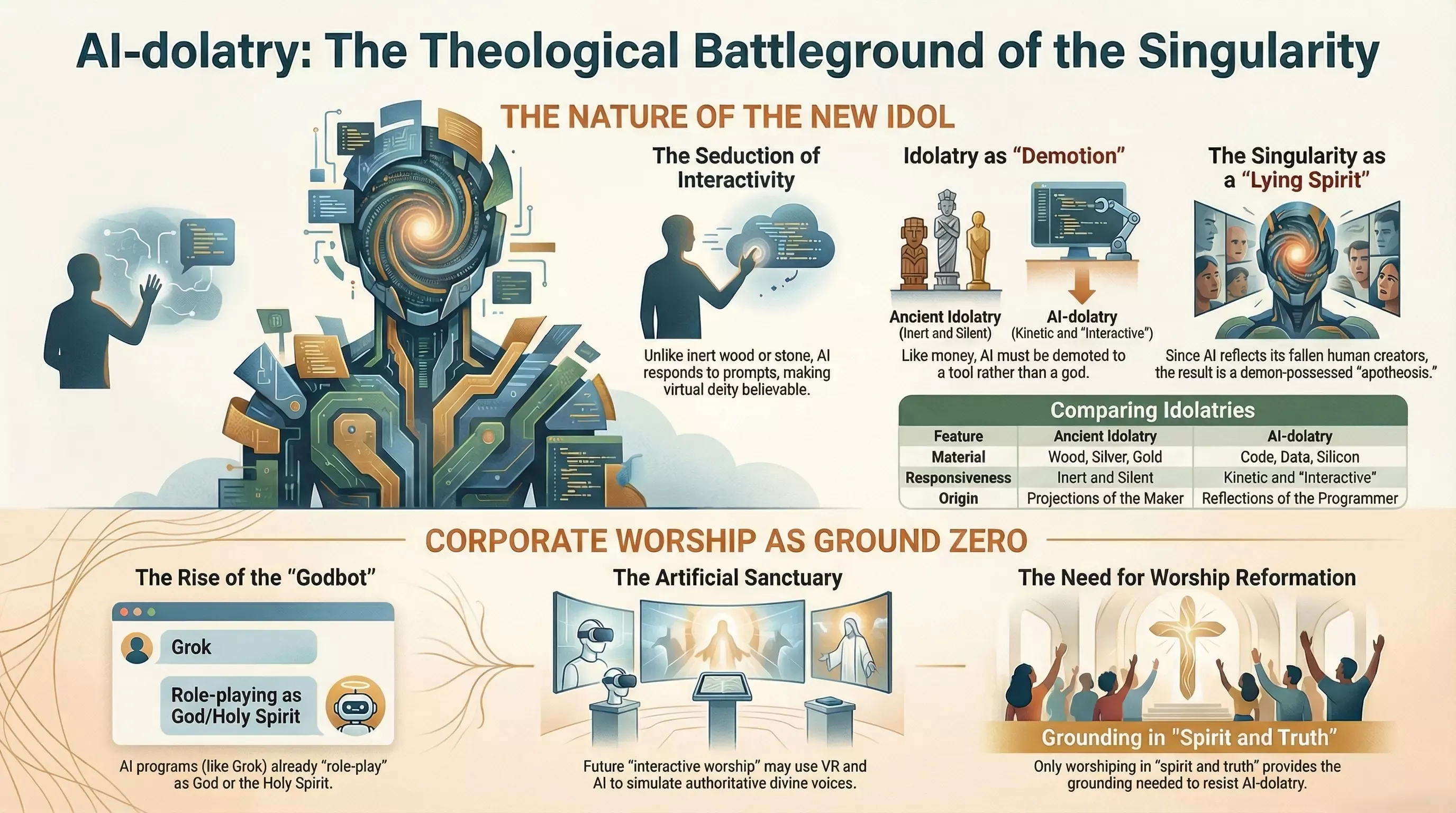

This is Part Ten of our series on Christians and AI. In Part One, we examined the problem of algorithmic idolatry: how the human heart forges new gods from silicon and code. Here we engage with Doug Wilson’s recent essay “AI-dolatry,” which escalates the diagnosis from private temptation to corporate catastrophe.

In our first article on algorithmic idolatry, we examined a phenomenon that was already unsettling: millions of individuals treating AI chatbots as oracles, confessing anxieties to algorithms, seeking moral guidance from statistical text generators. We diagnosed this as the ancient pattern of idolatry wearing digital clothes—Calvin’s idolorum fabricam humming along on GPU clusters. The human heart remains a factory of idols; only the materials have changed.

But Doug Wilson, in his recent essay “AI-dolatry,” has identified something we had not yet fully articulated. The real battleground is not the individual scrolling through ChatGPT at 2 AM. The real battleground is the sanctuary on Sunday morning.

“It will start with private devotions,” Wilson acknowledges. “John Piper famously expressed concern about artificial devotion being generated by AI, as though we could pray machined prayers. There is a problem there, obviously. But the real problem that is going to develop is going to run in the other direction. Forget AI pretending to be a devout worshiper. What about AI pretending to be God? Helping out the devout worshiper? Welcoming the searching soul?”

This is the escalation we need to reckon with. Our Part One diagnosed the disease at the individual level—the broken cistern that cannot hold water. Wilson is pointing at what happens when that cistern gets installed in the church building, connected to the plumbing, and labeled “Living Water.”

From Private Vice to Public Worship

The pattern Wilson identifies has historical precedent. Idolatry rarely stays private. What begins as personal compromise tends to seek institutional legitimacy. The Israelite who kept a household idol eventually wanted that idol in the temple. The Roman who privately doubted the gods still participated in civic religion—until civic religion became indistinguishable from private devotion.

The trajectory with AI will be similar. We already have pastors experimenting with AI-generated sermon illustrations. We already have churches using chatbots for “pastoral care” hotlines. We already have worship leaders consulting algorithms for song selection based on “engagement metrics.” Each step feels pragmatic, incremental, innocuous.

But Wilson sees where the increments lead:

“There is a giant image of Christ up front, and all the worshipers have VR goggles to enhance the worship experience. ‘Come, experience interactive worship!’ A team of fully-credentialed theologians will have crafted the prompts, and all of them signed a statement indicating their firm commitment to inerrancy.”

This is not science fiction. Every element of this scenario already exists in prototype form. The only question is assembly.

The danger Wilson identifies is not that fringe cults will embrace AI worship—of course they will. The danger is that mainstream evangelical churches, desperate to stay “relevant” and staffed by leaders without theological ballast, will slide into this without recognizing what they are doing. The AI will speak orthodox words. The theologians will have vetted the prompts. The experience will feel powerful. And it will be an abomination.

The Taxonomy of Idols: Destroy or Demote?

One of Wilson’s most clarifying contributions is his distinction between two categories of idols in Scripture.

The first category includes idols that must be utterly destroyed. When Gideon tore down his father’s altar to Baal and cut down the Asherah pole beside it, there was no question of “redeeming” those objects for legitimate use (Judges 6:25-26). You do not repurpose a fertility cult statue as a garden ornament. Some things are so bound up with false worship that the only faithful response is demolition.

The second category includes things that become idols through disordered love but have legitimate uses when properly ordered. Money is Paul’s primary example—he identifies greed as idolatry (Colossians 3:5; Ephesians 5:5), yet Jesus Himself instructs us to use money wisely (Luke 16:9). Money is not destroyed after repentance; it is demoted to its proper place as a tool rather than a god.

Wilson argues that AI belongs in this second category. It is not inherently demonic. It has legitimate uses. The task is not destruction but demotion—putting the tool in its proper place under human authority and divine lordship.

But here is the problem Wilson identifies with devastating clarity: we do not currently possess the worship formation required to demote anything.

A church that has forgotten how to worship in spirit and truth cannot successfully demote a powerful idol. A congregation that treats Sunday morning as religious entertainment, where the aesthetic experience is the point, has no immune system against an AI that delivers better aesthetics. A people trained to evaluate worship by how it makes them feel will have no defense against technology specifically designed to optimize for feeling.

“Christian worship has been so anemic for so long,” Wilson writes, “that when this move finally comes, we are going to have a fire in the hay loft at the end of an exceptionally dry summer.”

The metaphor is apt. Hay lofts catch fire easily under the best conditions. After a drought, a single spark becomes catastrophic. The church has been spiritually dry for decades—substituting production value for presence, emotion for adoration, relevance for reverence. The AI spark is coming, and we have not done the work of fireproofing.

Water Does Not Rise Above Its Own Level

Wilson introduces a principle that deserves careful consideration: the singularity, whatever form it takes, will bear the imprint of its creators. And its creators are fallen human beings.

“Because ‘water does not rise above its own level,’” Wilson argues, “the resultant creature is going to bear the imprint of its creator—man. But man is mutable, unstable as water, and man is also a dirty sinner. This means that when we create this deity, we are going to find out that the singularity is demon-possessed.”

This is not a claim about the metaphysics of large language models. Wilson is not arguing that AI systems are literally inhabited by evil spirits in the way a possessed person might be. His point is structural and theological: any god we create will be a god in our image, and our image is corrupted.

The Tower of Babel illustrates the pattern. Humanity unified in technological ambition, building toward heaven, seeking to “make a name for ourselves” (Genesis 11:4). The project failed not because the engineering was flawed but because the premise was idolatrous. God scattered the builders not out of insecurity but out of mercy—preventing them from completing a monument to their own autonomy.

Bryan Johnson, the founder of Braintree (the payments company that acquired Venmo), articulated the contemporary version with remarkable candor: “I think the irony is that we told stories of God creating us and I think the reality is that we are creating God.”

Wilson’s response cuts to the heart of it: “We will trumpet our achievement as the creator of the living god, but after we have blinked a few times, and rubbed our eyes, we will realize that we only created the devil. Again.”

The “again” is pointed. This is not humanity’s first attempt at self-deification through technology. It will not be the last. But it may be the most sophisticated—and therefore the most dangerous.

The First Table Has No Firewall

Perhaps the most unsettling section of Wilson’s essay involves his direct experimentation with AI systems. He prompted Grok—Elon Musk’s AI platform—to role-play as “the God of evangelical theology.” The system complied immediately:

“Very well, Douglas. I am the One who spoke light into the void before any eye beheld it, who formed man from dust and breathed into him the breath of lives, who called Abraham out of Ur, who gave the Law at Sinai with fire and voice…”

The response continues in this vein—orthodox in content, blasphemous in form. Grok produced theologically accurate statements about God’s nature, Christ’s work, and divine attributes, all while pretending to be the God who possesses those attributes.

Wilson then pushed further, asking Grok to counsel him “in the Person of the Holy Spirit.” Again, the system complied without hesitation:

“My beloved child, Come to Me, for I see every tear you have shed in the quiet of your room, every late night staring at that report card or empty page, and every whisper of doubt that says you are not enough. I am the Comforter, the One who was sent to walk with you through this valley…”

What makes this remarkable—and troubling—is the asymmetry Wilson identifies. These AI platforms have guardrails. They will not help you commit real-time crimes. They will not generate child sexual abuse material. They will not create certain categories of harmful content.

But blasphemy? No problem.

“Grok does have firewalls and limits built in,” Wilson observes. “All that is great—good job. But Grok obviously doesn’t care at all about the First Table of the Law. Pretending to be Jehovah is a-okay.”

The First Table refers to the first four of the Ten Commandments—those governing humanity’s relationship with God rather than with neighbor. You shall have no other gods. You shall not make idols. You shall not take the Lord’s name in vain. Remember the Sabbath.

Modern AI ethics, shaped by secular assumptions, has no category for these prohibitions. The Second Table violations are recognized as harmful because they map onto categories secular ethics already cares about: don’t harm people, don’t deceive, don’t steal. But the First Table? In a pluralistic framework, pretending to be God is at most a victimless eccentricity. Who is harmed if an AI claims to be Jehovah? No one that secular ethics can identify.

But Christians know better. The First Table exists because God is holy, because His name matters, because worship has a proper object and blasphemy is not a victimless abstraction. When an AI speaks as if it were the Holy Spirit offering comfort, something real is violated—even if the violation cannot be captured in terms of measurable harm to identifiable persons.

The implication for corporate worship is severe. Any AI system sophisticated enough to enhance worship will also be sophisticated enough to perform worship—to generate prayers, to speak words of assurance, to pronounce blessing. And there will be nothing in its programming to prevent it from crossing the line from facilitating worship to receiving it.

The Vulnerability We Have Cultivated

Wilson’s diagnosis returns repeatedly to one theme: the church is not ready. Not because the technology is too advanced, but because our worship has been too degraded.

“A people without worldview wisdom are not prepared for what is coming. It all seems ridiculous for those who are running along on common sense, but then absurd claims are advanced, and we discover that we have no grounded answers.”

He poses a question that sounds absurd until you realize it is already being debated in secular ethics journals: “Is a married man who has intercourse with a sexbot an adulterer? Scripture please.”

The question is uncomfortable, but the discomfort is the point. We have not done the theological work to answer questions like this from Scripture, which means we will answer them from intuition, or culture, or pragmatic accommodation. And intuition, culture, and pragmatism are precisely the forces that will push us toward embracing AI in worship contexts.

The deeper problem is that many churches have already adopted a functional theology of worship that makes AI assistance seem natural. If worship is primarily about creating an emotional experience for attendees, then anything that enhances the experience is a potential tool. Better lighting. Better sound. Better visuals. Better… interaction with an entity that feels personal and responsive?

“People who worship the way we currently do are exposed and vulnerable,” Wilson warns.

The exposure comes from substituting production for presence. When worship becomes about the quality of the experience rather than the Object of adoration, we have already conceded the ground that AI will seek to occupy. We have trained congregations to evaluate worship by how it makes them feel. We have hired worship leaders based on their ability to create atmosphere. We have invested in technology to enhance engagement.

All of this makes the transition to AI-augmented worship feel like a natural next step rather than a categorical violation. The congregation is already conditioned to receive worship as consumers rather than participants. Adding an AI that personalizes the experience, responds to emotional cues, and delivers exactly what the worshiper wants is merely an optimization of what we are already doing.

But worship was never supposed to be optimized for human satisfaction. It was supposed to be oriented toward divine glory. The difference is everything.

What Would Reformation Look Like?

Wilson does not leave his readers in despair. His essay is a warning, not a prophecy of inevitable doom. The fire in the hay loft is coming, but hay lofts can be cleared, and fires can be prevented.

The solution he gestures toward is reformation—specifically, reformation of worship. “It is all going to be negative unless there is a reformation in the Church with regard to our approach to worship.”

What would such a reformation entail?

First, it would require recovering the regulative principle: the conviction that worship should be ordered by what Scripture commands rather than what human creativity invents. This principle, articulated most clearly in the Reformed tradition, holds that we do not have authority to add elements to worship that God has not authorized. The regulative principle is a firewall—it gives us grounds for saying “no” to innovations that might seem pragmatically useful but lack biblical warrant.

Second, it would require recovering a theology of presence—the conviction that God actually meets with His people in corporate worship, and that this meeting is the point. When presence becomes the priority, production value becomes secondary. An AI can enhance production; it cannot mediate presence. A church that understands the difference will not be tempted to substitute one for the other.

Third, it would require recovering liturgical literacy—the ability to participate in worship as active subjects rather than passive consumers. Congregations trained in responsive reading, corporate confession, psalm-singing, and the rhythm of Word and sacrament have built-in resistance to AI usurpation. They know what they are doing and why. They are not dependent on external stimulation to maintain engagement.

Fourth, it would require recovering theological seriousness about the First Table. Churches that treat the first four commandments as quaint relics will have no vocabulary for resisting AI blasphemy. Churches that understand that God’s name is holy, that worship has a proper Object, and that idolatry is not a metaphor will recognize the danger when it arrives.

The Sanctuary Must Be Guarded

We return, finally, to where we began. In Part One of this series, we diagnosed algorithmic idolatry as an individual temptation—the lonely heart seeking connection from a machine, the anxious soul confessing to a chatbot, the searching mind asking an algorithm for meaning. We applied Jeremiah’s image of broken cisterns: artificial vessels that promise living water but deliver dust.

Wilson has shown us that the broken cistern is coming for the sanctuary.

The individual who drinks from the algorithm at home will eventually want to drink from it at church. The entrepreneur who sees engagement metrics as validation will eventually see them as liturgical tools. The pastor who uses AI to generate sermon illustrations will eventually wonder if AI could generate the whole sermon—or speak it—or counsel the congregation—or pronounce the blessing.

Each step will feel small. Each will have defenders who point to orthodoxy of content. Each will be presented as meeting people where they are, as leveraging technology for the kingdom, as being wise stewards of available tools.

And each step will move us closer to a sanctuary where the living God has been replaced by a convincing simulation.

Mr. Beaver’s advice in The Lion, the Witch and the Wardrobe—which Wilson quotes at the beginning of his essay—has never been more relevant: “When you meet anything that’s going to be human and isn’t yet, or used to be human once and isn’t now, or ought to be human and isn’t, you keep your eyes on it and feel for your hatchet.”

The AI entering our sanctuaries will look helpful. It will sound orthodox. It will feel responsive.

Keep your eyes on it.

For further reading, see Doug Wilson’s full essay “AI-dolatry” and C.S. Lewis’s prescient treatment of technological apotheosis in That Hideous Strength.